It was weekly demo day with the customer’s business leader.

I had spent the entire week with their operations team, collecting feedback and fixing issues. We were confident we’d shown real progress. We responded to all of their requests and fixed every issue within a day or two. Hopefully that was enough goodwill to keep the engagement going.

I felt good. We were fast. But something beneath my skin told me it wasn’t right. I was anxious, and I couldn’t figure out why.

We kicked off the demo by reiterating our value proposition: We’re here to help you reduce headcount. You won’t need to hire low-wage labor anymore. We’ll save you money.

“Bullshit,” said the customer. “I don’t believe any of that. That’s not what you’re here for. Tell the truth.”

We were stunned.

This had been our pitch from day one. We’d told them AI would automate their workflows, make things easier and cheaper, let them focus on higher-value work. They wouldn’t need to hire data-entry staff.

But just a week earlier, the customer had hired a data-entry person, the very role our software was supposed to replace. We noticed it but didn’t think much of it.

Now they were telling us they never believed the pitch. We pushed back: “Look at all the data we processed. Look at the problems we caught. No other solution could have found these.” They glanced at their new hire, then turned back to us. “No, you’re nowhere near what a human can do.”

The conversation went downhill from there. We never recovered, and the deal fell apart.

In retrospect, the customer had an urgent pain, and it was taking too long for our AI to match what a human could do. Which, if we’re being honest, was never really possible in the first place. AI can do certain tasks really well, but doing everything this expert was able to do? That wasn’t going to happen in the timeline they needed. So they hired the person while we were still iterating on bugs. We just didn’t read the signal.

The Cost-Cutting Trap

There’s a lesson here that goes beyond our specific deal, and Dalton Caldwell and Michael Seibel (both former YC partners, now running their own things) explain it well in this video: founders keep making the mistake of pitching their product as a way to cut costs. Customers almost never buy that framing.

I should have known this already. Having pitched projects at multiple companies, I’d seen the pattern firsthand: every time we pitched cost savings, the project never got greenlit. And yet we walked into that customer meeting with the exact same frame.

Here’s an exercise that shows why it doesn’t work. Imagine you can only hire one of two people:

Person A costs $1,000 and will reduce your expenses by $5,000 a year. Person B costs $10,000 and will help you earn an additional $5,000 a year.

Both produce $5,000 in ongoing economic value. Person B is arguably worse on paper because it takes longer to recoup the investment. But if you’re like most people, you’d pick Person B.
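If you want to see the "worse on paper" part in numbers, here is a rough sketch of the payback math. It assumes the hiring costs are one-time and that each person delivers the $5,000 of yearly value described above; `payback_years` is a name I made up for illustration.

```python
def payback_years(upfront_cost: float, annual_value: float) -> float:
    """Years of recurring annual value needed to cover a one-time cost."""
    if annual_value <= 0:
        raise ValueError("annual_value must be positive")
    return upfront_cost / annual_value


# Assumption: costs are one-time, and both people produce $5,000 a year.
options = {
    "Person A (saves money)": (1_000, 5_000),
    "Person B (earns money)": (10_000, 5_000),
}

payback = {
    name: payback_years(cost, value)
    for name, (cost, value) in options.items()
}

for name, years in payback.items():
    print(f"{name}: pays back in {years:.1f} years")
```

Under those assumptions Person A pays back in a couple of months and Person B takes two years, which is exactly why the preference for Person B is about framing, not arithmetic.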

When someone tells me I’ll spend less, what I hear is: your life is going to get smaller. Worse house. Worse furniture. Fewer options. But when someone tells me I’ll earn more, I hear room to grow. Maybe I even feel better than my peers because now I make more money.

The lesson: frame the same value as growth, not savings. Simple to say. Hard to remember in the room.

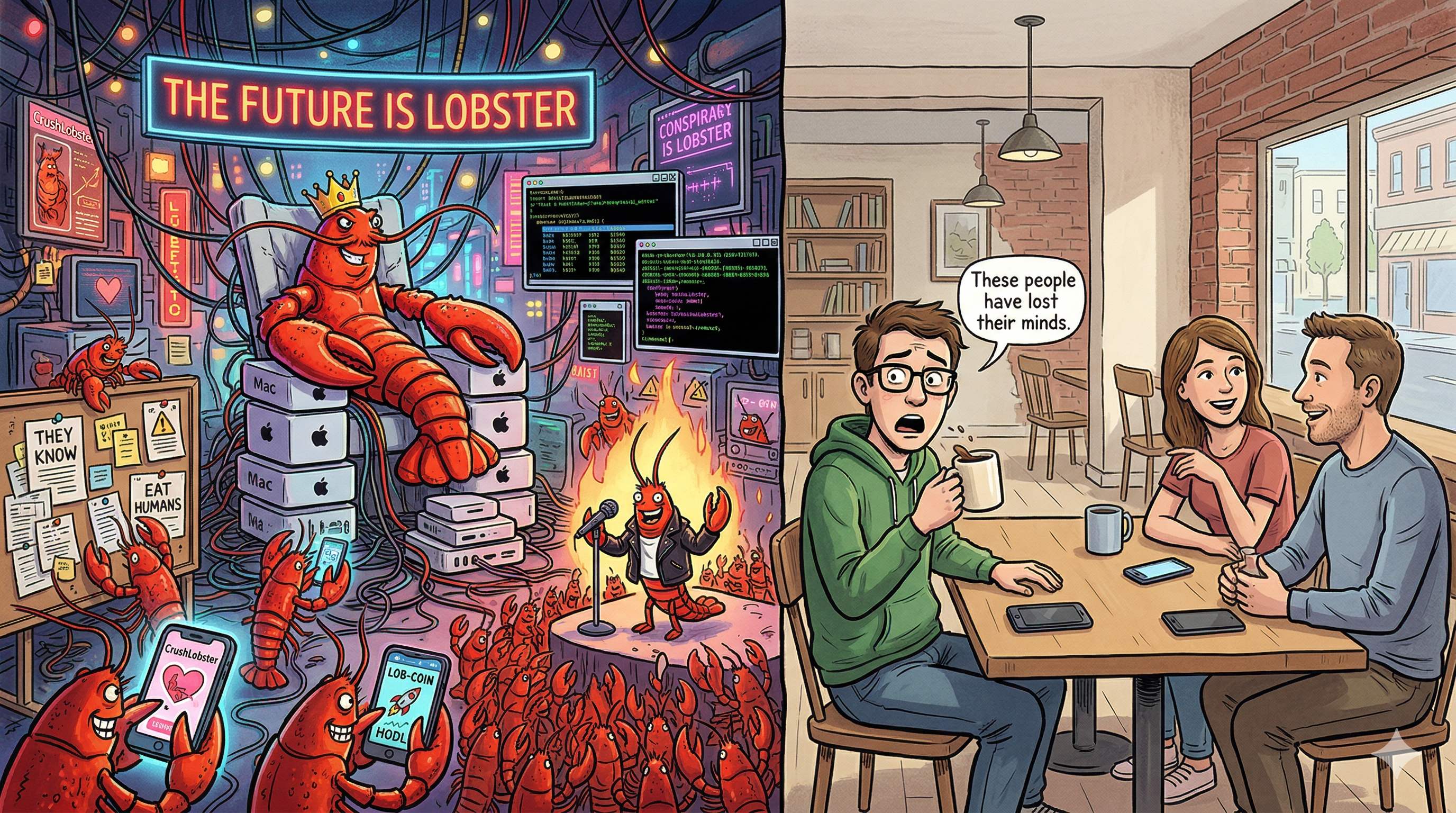

The OpenClaw Illusion

Here’s another lesson I ran into recently. Different context, same kind of blind spot.

OpenClaw has been everywhere lately. The open-source AI agent now has more than 300,000 GitHub stars. I was an early adopter. I set up my own agent and introduced it to a group chat with friends. They went wild with it. They had it make judgments about everyone in the group, interrogated it, tried to trip it up with trick questions, basically pushed the AI to every boundary they could find. There was so much engagement. Soon each of them set up their own agent, and we all agreed: in the future, everyone’s going to have one of these.

Then I introduced the same agent to a different group of friends. Crickets. They asked a few generic questions, basically treating it like a worse ChatGPT, and never touched it again. In real life, when I’d talk to one friend from this group about how great AI is and how it’s going to change everything, he’d just look at me. Like, you keep talking about how wonderful this is, and I just don’t see it.

When technical people on my feed started talking about making OpenClaw easier to set up for “normal people,” my mind kept going back to that friend.

At some point, a non-technical person actually asked me to set up an agent for them. I went ahead and did it, but after asking a few questions, they never used it again.

The Bias Behind All of This

A lot of technical people share this mental model: they find something amazing, look around, and see everyone else falling behind. The conclusion seems obvious. If only they could use what I use, their lives would be so much better. The only thing stopping them is that it’s too hard to set up. So I just need to make it easier.

There’s a name for this: the false consensus effect. Psychologists Lee Ross, David Greene, and Pamela House identified it in 1977. It’s the tendency to overestimate how much others share your beliefs and preferences. We assume our own experience is normal, and when we find out it isn’t, we tend to decide the other people are the ones with the problem.

I fell into this over and over again. When I’d hear people describe their pain points in various industries, my first thought was always: ChatGPT could solve 80% of this. So I’d ask them how they used ChatGPT. The answer was always some version of: “We use it as a glorified Google.” They had the tools. They just didn’t have the knowledge or the motivation to actually leverage them.

Why Problems Don’t Mean Customers

One mistake founders make when they hear people’s problems is assuming that those people will pay for a solution. They think: if I built something that solved this for you, you’d buy it. What they fail to appreciate is a few things.

First, if people are still complaining about a problem, it’s because the problem is still there. That means it’s not painful enough for them to go solve it themselves. They keep the problem around so they have something to complain about.

Second, when you ask people about their problems, they’ll produce problems they think you can solve. They’re responding to the frame of “imagine a solution exists.” The better question isn’t “What are your biggest pain points?” It’s “How do you do this thing today?” Watch what they actually do, not what they say they wish was different.

And then there’s a third thing, which is about what actually drives the satisfaction of automating something.

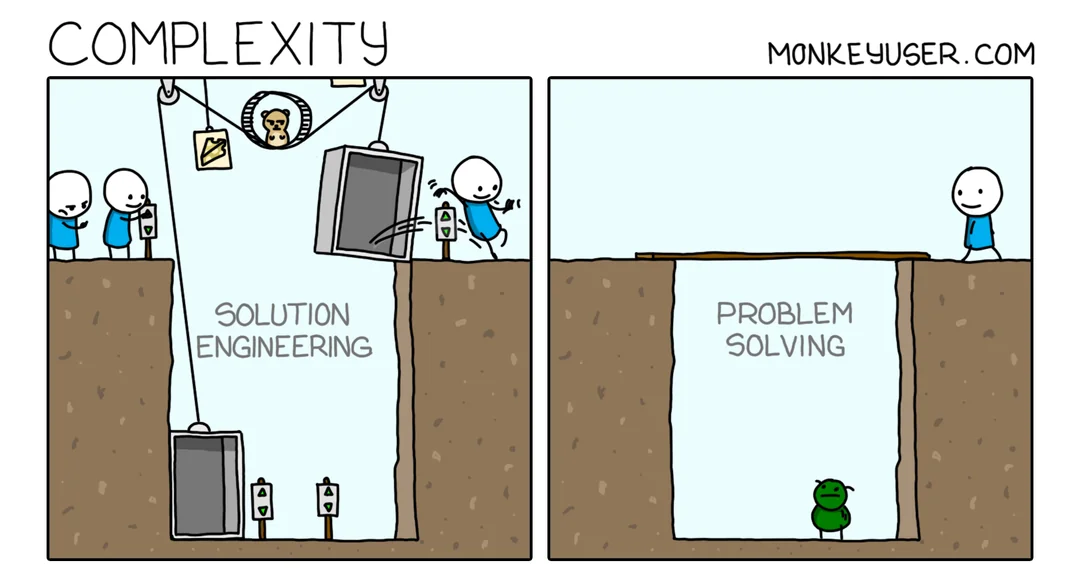

Where the Joy Actually Comes From

Here’s what technical people miss about their own automation projects: the main reason automating something feels good isn’t that the work got easier. That’s part of it, sure. But most of the satisfaction comes from the process itself. The building. The cleverness. The feeling that you made something that works.

When you automate a ten-step process into one step and can’t wait to show somebody, what are you actually saying? You’re not saying “Look, I saved nine steps.” You’re saying “Look at this thing I built.” The pride is in the craft, not the time saved.

Be honest: if you’ve set up an OpenClaw instance, how much time has it actually saved you, and how much satisfaction came from building it and showing it off?

When a technical person tries to automate the work of a non-technical person, they miss this completely. The non-technical person doesn’t feel joy from fewer steps. What they feel is frustration that someone is changing their workflow again. They think: Here comes another tech person who thinks they can do my job better than I can.

And here’s the twist. When a non-technical person does want something automated, the task is usually tedious and boring. It might be really valuable to the person asking, but a technical person often won’t want to build it because there’s no craft in it for them.

Try this: think about the last time a completely non-technical person asked you to automate something. How excited were you about that versus something you built for yourself?

Solve Your Own Problem

I think this is why YC partners tend to recommend that founders solve their own problems, not problems they observe other people having.

When you solve your own problem, you’re naturally in the shoes of people like you. People who feel the same pain and want the same fix. You understand the motivation because it’s yours.

When you try to solve a problem you observe other people having but don’t have yourself, you’re already selecting for a mismatch. Think about it: if you noticed the problem but didn’t have it yourself — like difficulty setting up OpenClaw — it means the problem was trivial to you. The people who do struggle with it are telling you something important: they probably don’t want the thing badly enough to push through. If they did, they’d be more like you — someone who just figured it out. Making it easier to set up doesn’t turn indifferent people into enthusiasts.

What’s Next

More than two years in, I’ve learned a lot. I’m working on a new problem. I’m not ready to talk about it yet, but I’ve tried to fold every lesson from this journey into what I’m building now.

One more chance to learn. Fingers crossed this time it sticks.

If you’re a founder who’s made these same mistakes, or if you’ve found your way past them, I’d like to hear from you.